Posts: 10
UsefullPig changed their profile photo

-

Hitman 2 has some really good concept art and 3D renderings. I love the art style of that game. https://www.artstation.com/search?q=hitman 2&sort_by=relevance

-

I feel insane trying to solve this issue

UsefullPig replied to UsefullPig's topic in Gaming in general

For anyone who stumbles upon this thread, here is how I fixed my problem: -

UsefullPig started following We're finally getting integer-ratio scaling! and THE GUI SHOULD BE BETTER

-

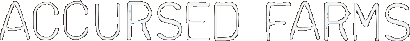

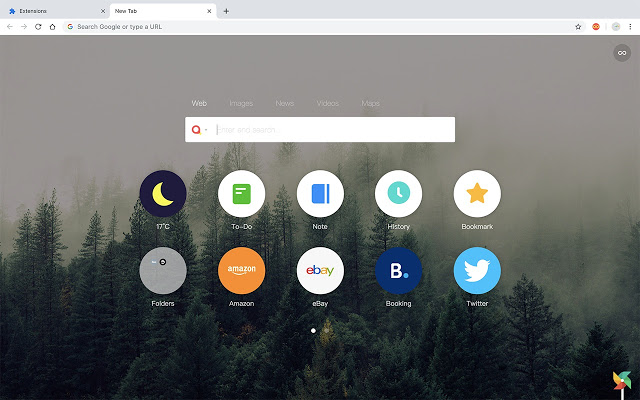

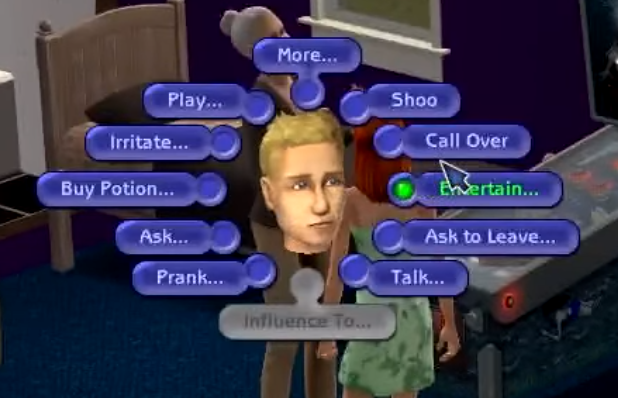

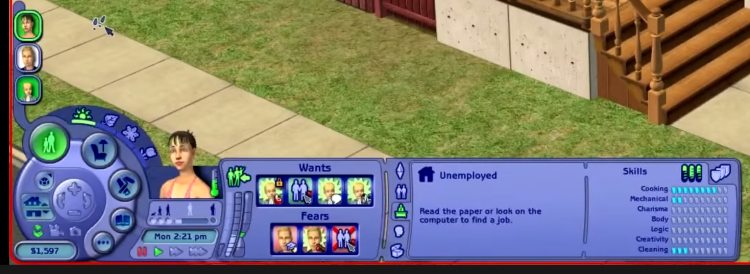

Well, I guess I'll throw my hat into the ring. I've watched the new video twice now, so I have some thoughts.

Infinity New Tab

I love the Chrome/Firefox extension "Infinity New Tab". It takes a bit of tweaking to look good, but it's absolutely pleasant for me to use with my current setup. These website icons are massive landing strips, as opposed to the tiny helipads bookmarks are.

This is my setup:

This is what it looks like by default:

The Sims 2

TS2 has my favorite game UI that I can think of. The circle launcher Ross envisioned in the video is present here, and it's contextual too. You can even Shift+Click for debug options if you enable testing cheats.

Also look at the main part of the GUI:

Notice that the buttons are sized relative to importance. And I love that it's laid out from left to right in separate sections that can grow or generate new sections next to them.

Look at it in a collapsed view:

Hotkeys

I largely hate hotkeys. Ross's sentiment resonates with me. "Better learn those hotkeys. Hup hup hup" is what a lot of Adobe Premiere tutorials look like. I wish the Sonder Keyboard existed already, but it looks like it's never going to come out.

I use these on the regular:

- Win+Shift+S to take screenshots. I used this one a lot while making this post.
- Alt+Tab to switch programs. Holding down Alt+Tab is actually why I don't have that big of a problem with the taskbar.
- Alt+\ is what I use on Discord to deafen myself.
- Ctrl+Shift+T to open up the tab I just closed.

Mobile UI/UX

Google copied iOS 11 when they made Stock Android 9, and they made it better. I honestly hate using Android 8 and lower thanks to Google's overhaul to the UI. Android 10 made it even better. I'll say this: I am just less frustrated when I use my Pixel than when I use my iPad. There are so many little touches that Android 10 has that it sometimes makes me feel like a "GUI Wizard" when I use it.

Alright lads, I'm too tired to keep writing this. I look forward to any replies.

Edit: Spelling.

Also, I'd be happy to expand on the tiny details Stock Android 10 has that make it a treat to use, and how well TS2's UI functions for what it's trying to accomplish. But later...

-

I feel insane trying to solve this issue

UsefullPig replied to UsefullPig's topic in Gaming in general

Ah, yeah, that's about how to force the game to give you all the resolution options, because it doesn't recognize modern graphics cards and defaults to settings meant for trash-tier processors from 2004. A lot of things about this game are broken on modern systems.

The Sims 2 was clearly not programmed to work on high-resolution screens. I feel like there's some value I could change in some config file, or some obscure program, that could fix this problem. Would you guys know of any programs, or how to search for that value? I know I can just set the resolution lower, but everything ends up being blurry and I feel like I'm not wearing my glasses. This is TS2 on a 4K display:

-

We're finally getting integer-ratio scaling!

UsefullPig replied to UsefullPig's topic in Computer Hardware

So real-time ray tracing doesn't really have anything to do with the new Turing architecture? I've noticed that my 1080 has a perfectly fine time doing path-traced shaders in Minecraft with the new SEUS PTGI shaders.

We're finally getting integer-ratio scaling!

UsefullPig replied to UsefullPig's topic in Computer Hardware

On all currently supported cards? If so, that's fantastic.

I've been playing Hitman™ 2. I could go on about the superb mechanics and sleek aesthetics, but I'll sum it all up with a quote from the Deus Ex: Invisible War video: "I love games that involve you hiding bodies from the authorities and watching people freak out if they see you."

Also, in the Deus Ex: Human Revolution episode, Ross talks about how Eidos Montréal shouldn't be making Deus Ex and some other studio should. I think Hitman™'s studio, IOI, is the perfect studio to pick up the torch. Hitman™'s mechanics are similar to Deus Ex's from what I've seen, so it shouldn't be too hard for them to make a Deus Ex game with good mechanics. All they would need to do is hire good writers, because Hitman™'s story sucks.

(Also, they could do a reboot of the series and call it "Deus Ex™" lol)

(Also also, I noticed that IOI slightly changed the HUD elements in Hitman™ 2 recently, and the latest Game Dungeon episode has me paranoid that they're gonna mess with the graphics in a bad way one of these days)

-

..but only on the most advanced CPUs and graphics cards in the world.

Official Announcements:

Intel: https://software.intel.com/en-us/articles/integer-scaling-support-on-intel-graphics

nVidia: https://www.nvidia.com/en-us/geforce/news/gamescom-2019-game-ready-driver/

A better summation: http://tanalin.com/en/articles/lossless-scaling/#h-progress

TL;DR: Intel is only supporting integer-ratio scaling on the integrated graphics of their 10th and 11th gen CPUs. nVidia is only supporting it on graphics cards with the new Turing architecture.

I can't believe that a simple algorithm will not be implemented on my GTX 1080, and as such this feature will be unavailable to me for a long time, because I'm not buying another top-of-the-line graphics card for several years.
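For anyone wondering what the "simple algorithm" looks like, here is a minimal Python sketch of integer-ratio scaling as it is generally understood: pick the largest whole-number factor that fits the display, then turn every source pixel into a factor-by-factor block of the same color, with no blending. This is only an illustration, not how Intel's or nVidia's drivers implement it. The function names `largest_integer_factor` and `integer_scale` and the list-of-rows image representation are assumptions made up for this example.

```python
from typing import List, Tuple

# Hypothetical types for this sketch: an image is a list of rows of RGB tuples.
Pixel = Tuple[int, int, int]
Image = List[List[Pixel]]


def largest_integer_factor(src_w: int, src_h: int, dst_w: int, dst_h: int) -> int:
    """Largest whole-number scale factor at which the source still fits the display."""
    # Never go below 1: a source larger than the display cannot be integer-upscaled.
    return max(1, min(dst_w // src_w, dst_h // src_h))


def integer_scale(image: Image, factor: int) -> Image:
    """Repeat every pixel `factor` times horizontally and vertically (no blending)."""
    if factor < 1:
        raise ValueError("factor must be a positive integer")
    scaled: Image = []
    for row in image:
        # Widen the row: each pixel becomes `factor` identical pixels.
        wide_row = [pixel for pixel in row for _ in range(factor)]
        # Repeat the widened row `factor` times to make square blocks.
        for _ in range(factor):
            scaled.append(list(wide_row))
    return scaled


if __name__ == "__main__":
    # A 1920x1080 game on a 3840x2160 (4K) display scales by exactly 2.
    print(largest_integer_factor(1920, 1080, 3840, 2160))

    red, blue = (255, 0, 0), (0, 0, 255)
    tiny = [[red, blue]]
    for row in integer_scale(tiny, 2):
        print(row)
```

Because every output pixel is a straight copy of one source pixel, nothing gets averaged, which is why the result stays sharp instead of looking like you forgot your glasses. When the factor does not divide the display evenly (say 800x600 on a 4K screen), the leftover space would have to be filled with black borders.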
